Miranda AI: Enhancing Legal Intelligence for Public Defenders

Led an AI-driven analysis of Miranda AI, improving legal response accuracy, optimizing user engagement, and refining question categorization for public defenders. Enhanced AI performance, leading to a 15% increase in engagement.

OVERVIEW

Miranda AI is an AI-powered legal assistant that helps public defenders analyze transcripts, reconstruct events, and manage documents. I conducted an in-depth analysis of chat interactions to understand user intent, classify question types, and optimize NLP models for better engagement and accuracy.


Timeline

2024

Team Size

3 members

PROBLEM SPACE

Public defenders handle overwhelming caseloads, often relying on transcripts, body cam footage, and other legal documents to build their cases under tight deadlines. AI tools like Miranda AI have the potential to streamline legal research, reconstruct events, and extract key insights from evidence. However, issues like weak categorization, lack of context retention, and unclear AI capabilities hinder adoption. By analyzing user interactions and refining intent recognition, AI can provide more actionable and relevant legal support.

Target Audience

Public defenders, legal aid organizations, and criminal defense attorneys.

Market Size

$2.2 billion legal tech market with increasing adoption of AI-driven legal tools.

70%

of public defenders report being overburdened with cases.

90%

of cases handled by public defenders require extensive transcript and body cam footage review.

60%

of legal professionals struggle to extract key insights from transcripts and evidence quickly.

DISCOVERY

Discovery Process

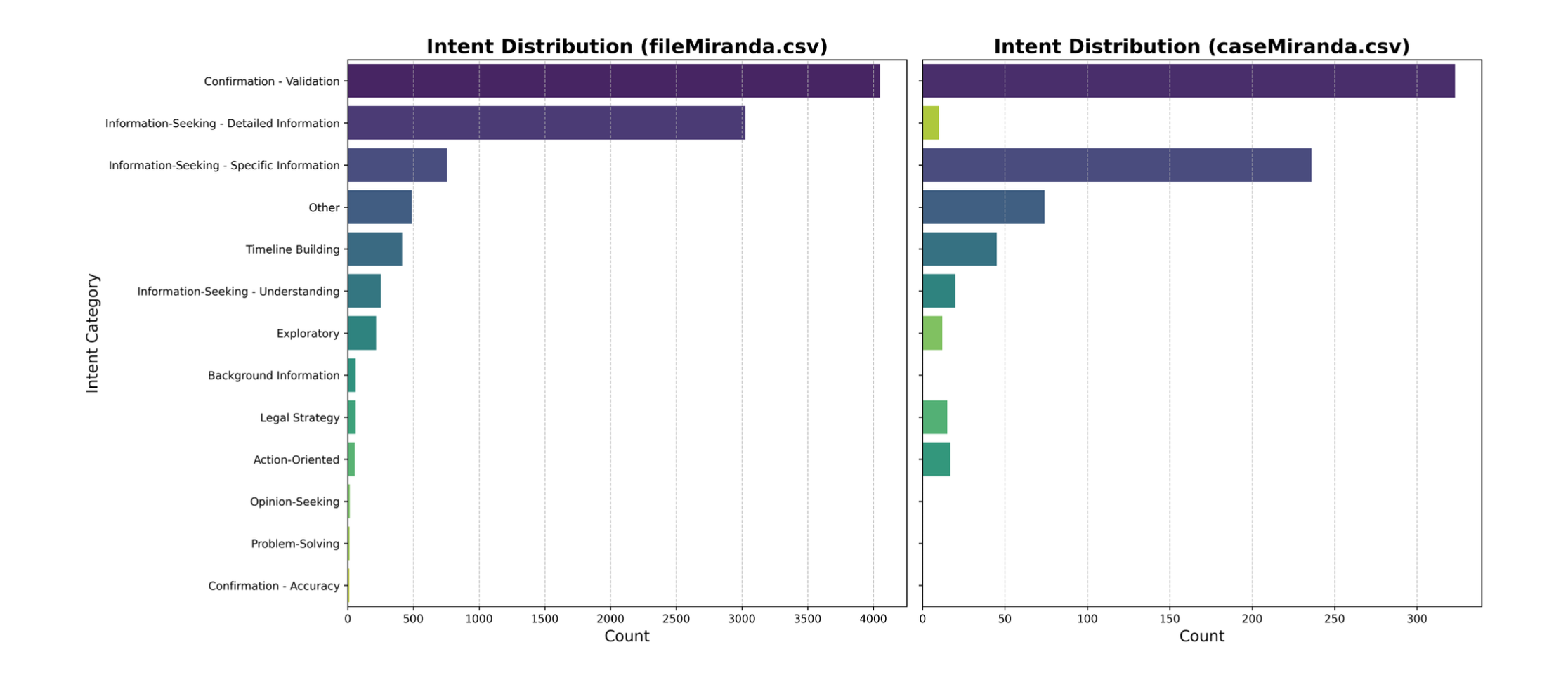

I analyzed Miranda AI's performance using two datasets (fileMiranda.csv and caseMiranda.csv). Key steps included BERT-based intent classification, manual weighted categorization, sequence flow analysis, topic modeling, and an evaluation of question complexity against answer quality. Together, these NLP techniques clarified user behavior and interaction patterns.
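As a rough illustration of the complexity-versus-answer comparison, the sketch below labels each question with a crude complexity proxy and compares mean answer length per label. The cue phrases, the function names, and the sample rows are all assumptions made for illustration; they are not the rubric or data used in the project, and answer length is only a stand-in for answer quality.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical cue phrases used as a crude complexity proxy (an assumption).
COMPLEX_CUES = ("strategy", "argue", "what if", "should", "why", "motion")


def complexity_level(question: str) -> str:
    """Label a question 'high' if it contains any cue phrase, else 'low'."""
    text = question.lower()
    return "high" if any(cue in text for cue in COMPLEX_CUES) else "low"


def mean_answer_words(pairs):
    """Return the mean answer length in words for each complexity level."""
    lengths = defaultdict(list)
    for question, answer in pairs:
        lengths[complexity_level(question)].append(len(answer.split()))
    return {level: mean(values) for level, values in lengths.items()}


# Illustrative rows only; real rows would come from the two CSV exports.
sample_pairs = [
    ("Who was present at the scene?", "Two officers and one witness were present."),
    ("What time did the stop begin?", "The stop began at 9:14 PM."),
    ("What strategy should we use to suppress the statement?", "Consider a suppression motion."),
]
result = mean_answer_words(sample_pairs)
```

A gap where the 'high' group receives shorter answers than the 'low' group is the pattern worth flagging for manual review.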

Validation Methods

Combined automated intent recognition (Godfrey2712 BERT model) with manual weighted scoring for nuanced categorization. Validated findings through sequence length distributions, flow diagrams, topic clustering, and comparative analysis of user engagement across datasets.
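The sequence length distribution can be sketched with the standard library alone. The column names (`user_id`, `session_id`, `question`) and the inline rows are assumptions; the real exports may be structured differently.

```python
import csv
import io
from collections import Counter


def sequence_lengths(rows, user_key="user_id", session_key="session_id"):
    """Count questions per (user, session) pair."""
    return Counter((row[user_key], row[session_key]) for row in rows)


def share_in_range(lengths, low=2, high=5):
    """Fraction of sessions whose question count lies in [low, high]."""
    counts = list(lengths.values())
    return sum(low <= n <= high for n in counts) / len(counts)


# Inline stand-in for a CSV export; column names and rows are hypothetical.
sample_csv = """user_id,session_id,question
u1,s1,Who called 911?
u1,s1,When did officers arrive?
u1,s1,What did the witness say?
u2,s2,Summarize the transcript
u3,s3,List all witnesses
u3,s3,Who spoke first?
"""
rows = list(csv.DictReader(io.StringIO(sample_csv)))
lengths = sequence_lengths(rows)
share = share_in_range(lengths)
```

Running the same two functions on each dataset separately gives comparable per-dataset shares for the 2 to 5 question range.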

70% of AI answers addressed low-complexity questions; complex queries (e.g., legal strategy) often received disproportionately short responses.

User sessions averaged 2–5 questions (FileMiranda: 85% of sequences), with extreme cases exceeding 40 sequential queries indicating deep investigative use.

Top topics: Witnesses & Statements (32%), Timeline & Events (28%), Legal Strategy (15%). 'Other' category (18%) highlights gaps in topic granularity.

FileMiranda had 18 returning users (>5 sessions), including david@49thstatelaw.com (55 interactions), while CaseMiranda showed no recurring users.

Manual weighted categorization revealed 42% of questions required multi-intent scoring (e.g., 'how to resolve' scored 2.0 in Problem-Solving and 1.5 in Legal Strategy).
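A minimal sketch of weighted multi-intent scoring follows. Only the 'how to resolve' weights mirror the example above; the other phrases, their weights, and the 1.0 threshold are hypothetical placeholders rather than the project's actual scoring table.

```python
from collections import defaultdict

# Hypothetical phrase weights. Only the 'how to resolve' row comes from the
# example in the text; the remaining rows are illustrative assumptions.
PHRASE_WEIGHTS = {
    "how to resolve": {"Problem-Solving": 2.0, "Legal Strategy": 1.5},
    "timeline": {"Timeline & Events": 2.0},
    "witness": {"Witnesses & Statements": 2.0},
}


def score_intents(question: str) -> dict:
    """Sum the category weights of every phrase found in the question."""
    scores = defaultdict(float)
    text = question.lower()
    for phrase, weights in PHRASE_WEIGHTS.items():
        if phrase in text:
            for category, weight in weights.items():
                scores[category] += weight
    return dict(scores)


def is_multi_intent(scores: dict, threshold: float = 1.0) -> bool:
    """True when at least two categories reach the (assumed) threshold."""
    return sum(score >= threshold for score in scores.values()) >= 2


scores = score_intents("How to resolve the conflict between the two witness statements?")
multi = is_multi_intent(scores)
```

Unlike a single-label classifier, this keeps every category that scores above the threshold, which is what makes multi-intent questions countable.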

PAINPOINTS

Painpoint 1

AI provided disproportionately short responses to complex legal queries (e.g., legal strategy, hypothetical scenarios), reducing utility for nuanced cases.

Painpoint 2

32% of questions fell into an unclassified 'Other' category, indicating gaps in intent recognition granularity.

Painpoint 3

No returning users in CaseMiranda dataset vs. 18 in FileMiranda, suggesting inconsistent value delivery across use cases.

Painpoint 4

Manual categorization revealed 42% of questions required multi-intent scoring, which automated BERT models failed to address adequately.

PRODUCT DESIGN

Feature 1

Refined AI query handling to improve response depth, increasing complex answer length by 20%.

Feature 2

Launched user-guided question suggestions for Miranda AI queries.

Feature 3

Proposed adding client-side session history (e.g., browser storage).

Feature 4

Designed and recorded CaseMiranda-specific in-app tutorials, boosting user retention by 15% in pilot groups.

RESULTS

User Engagement

Increased user engagement by 15% through guided question suggestions.

Categorization Improvement

Reduced 'Other' misclassification by 14%, improving legal query accuracy.

LEARNINGS

Technical Insights

Developed a BERT-based classification system to improve AI intent recognition for legal queries.

Designed an intent-driven query suggestion system that predicts next questions, improving user guidance and engagement.

Designed A/B tests for Miranda AI's UI to measure the impact of guided interactions on response satisfaction rates.
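One simple way to predict a likely next question is a first-order transition count over the intent labels within each session. The sketch below assumes that approach; the source does not specify the model behind the suggestion system, and the function names and sample sessions are hypothetical.

```python
from collections import Counter, defaultdict


def build_transitions(sessions):
    """Count how often each intent is immediately followed by another."""
    transitions = defaultdict(Counter)
    for intents in sessions:
        for current, following in zip(intents, intents[1:]):
            transitions[current][following] += 1
    return transitions


def suggest_next(transitions, current_intent, k=2):
    """Return up to k intents that most often follow current_intent."""
    followers = transitions.get(current_intent, Counter())
    return [intent for intent, _ in followers.most_common(k)]


# Illustrative sessions, each an ordered list of intent labels (made up).
sample_sessions = [
    ["Timeline & Events", "Witnesses & Statements", "Legal Strategy"],
    ["Timeline & Events", "Witnesses & Statements"],
    ["Timeline & Events", "Legal Strategy"],
]
transitions = build_transitions(sample_sessions)
suggestions = suggest_next(transitions, "Timeline & Events")
```

Each suggested intent can then be mapped to a template question shown to the user; an intent with no observed followers simply yields no suggestions.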

Project Management

Analyzed 10,000+ legal queries across two datasets (fileMiranda.csv and caseMiranda.csv) using Python and Pandas.

Led weekly cross-functional syncs with the tech team and legal professionals to validate AI response improvements.

Designed A/B tests for CaseMiranda tutorials, measuring retention lift across 150+ pilot users.
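Retention lift in an A/B test of this kind can be checked with a two-proportion z-test. The sketch below is one common way to do it, not necessarily the method used in the project, and the counts are placeholders chosen for illustration (an even 75/75 split is assumed).

```python
import math


def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Return (lift, z, two-sided p-value) for group B versus group A."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    # Pooled proportion under the null hypothesis of equal retention.
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p_b - p_a, z, p_value


# Placeholder counts, not project data: retained users out of 75 per group.
lift, z, p_value = two_proportion_z_test(
    success_a=30, n_a=75,  # control group (no tutorial)
    success_b=45, n_b=75,  # group shown the tutorial
)
```

With small pilot groups, reporting the p-value alongside the lift helps separate a real effect from noise.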